If you have been using Linux for a while, you have probably encountered the term “environment variables” a few times. You might even have run the command export FOO=bar occasionally. But what are environment variables really, and how can you master them?

In this post I will go through how you can manipulate environment variables both permanently and temporarily. Finally, I will round off with some tips on how to properly use environment variables in Ansible.

Check your environment

So what is your environment? You can inspect it by running env on the command line, and search it with a simple grep:

$ env

COLORTERM=truecolor

DBUS_SESSION_BUS_ADDRESS=unix:path=/run/user/1000/bus

DESKTOP_SESSION=gnome

DISPLAY=:1

GDMSESSION=gnome

GDM_LANG=en_US.UTF-8

GJS_DEBUG_OUTPUT=stderr

GJS_DEBUG_TOPICS=JS ERROR;JS LOG

... snip ...

$ env | grep -i path

DBUS_SESSION_BUS_ADDRESS=unix:path=/run/user/1000/bus

OMF_PATH=/home/ephracis/.local/share/omf

PATH=/usr/local/bin:/usr/local/sbin:/usr/bin:/usr/sbin

WINDOWPATH=2
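If you are only interested in one variable, you do not need to grep through the whole list. Here is a minimal sketch using printenv; DEMO_VAR is a made-up name that I export only so there is something to look up.

```shell
# DEMO_VAR is a hypothetical variable, exported here only for illustration.
export DEMO_VAR=hello

# printenv prints the value of a single environment variable
# (and exits with a non-zero status if the variable is not set).
printenv DEMO_VAR

# The shell can also expand the variable directly.
echo "$DEMO_VAR"

# PATH is a colon-separated list; one entry per line is easier to read.
printenv PATH | tr ':' '\n'
```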

So where do all these variables come from, and how can we change them or add more, both permanently and temporarily?

Know your session

Before we can talk about how the environment is created and populated, we need to understand how sessions work. Different kinds of sessions read different files to populate their environment.

Login shells

Login shells are created when you SSH to the server, or log in at the physical terminal. These are easy to spot since you need to actually log in (hence the name) to the server in order to create the session. You can also identify these sessions by the small dash in front of the shell name when you run ps -f:

$ ps -f

UID PID PPID C STIME TTY TIME CMD

ephracis 23382 23375 0 10:59 pts/0 00:00:00 -fish

ephracis 23957 23382 0 11:06 pts/0 00:00:00 ps -f
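If your shell is Bash, you can also ask it directly. The sketch below assumes bash is installed and uses its login_shell option, which is read-only and set only in login shells. Note that the --login run reads your real profile files, so anything they print will show up as well.

```shell
# shopt -q login_shell succeeds only when bash was started as a login shell.
check='shopt -q login_shell && echo "login shell" || echo "not a login shell"'

# Ask an ordinary bash process and a login bash process the same question.
plain_kind="$(bash -c "$check")"
login_kind="$(bash --login -c "$check")"

echo "bash -c:         $plain_kind"
echo "bash --login -c: $login_kind"
```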

Interactive shells

Interactive shells are the ones that read your input. This means most sessions that you, the human, are working with. For example, every tab in your graphical terminal app is an interactive shell. Note that this means that the session created when you log in to your server, either over SSH or from the physical terminal, is both an interactive and a login shell.

Non-interactive shells

Apart from interactive shells we have non-interactive shells. These are the ones created by scripts and tools that do not connect a terminal to stdin and thus cannot provide interactive input to the session. Keep in mind that login and interactive are separate properties: a login shell is usually interactive, but it does not have to be.
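A shell can tell you which kind it is. In POSIX-style shells such as Bash and Zsh, the special parameter $- holds the current option flags and contains the letter i only when the shell is interactive. A minimal sketch (shell_kind is just a name I picked):

```shell
# $- holds the shell's option flags; "i" is present only in interactive shells.
case "$-" in
  *i*) shell_kind="interactive" ;;
  *)   shell_kind="non-interactive" ;;
esac

echo "this shell is $shell_kind"
```

Pasted into a terminal this should report an interactive shell, while the same lines saved to a file and run as a script report a non-interactive one.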

Know your environment files

Now that we know about the different types of sessions that can be created, we can start to talk about how the environment of these sessions is populated with variables. On most systems we use Bash, since that’s the default shell on most distributions. But you might have changed this to some other shell like Zsh or Fish, especially on your workstation where you spend most of your time. The shell you use determines which files are used to populate the environment.

Bash

Bash will look for the following files:

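/etc/profile

Run for login shells.

~/.bash_profile

Run for login shells.

/etc/bashrc

Run for interactive, non-login shells. On Red Hat-style systems this file is sourced from ~/.bashrc rather than read by Bash itself; Debian-based systems use /etc/bash.bashrc instead.

~/.bashrc

Run for interactive, non-login shells.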

That part about non-login is important: a login shell does not read ~/.bashrc on its own. This is why many users and distributions configure ~/.bash_profile to source ~/.bashrc, so that it is applied in login sessions as well, like so:

[[ -r ~/.bashrc ]] && . ~/.bashrc
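You can verify this behaviour without touching your real dotfiles. The sketch below assumes bash and mktemp are available; it points HOME at a throwaway directory and uses two made-up marker variables, FROM_PROFILE and FROM_BASHRC, to show which file was read. The login run also reads the system-wide /etc/profile, so extra output is possible on some systems.

```shell
# Throwaway home directory so the real dotfiles are left alone.
demo_home="$(mktemp -d)"

# Hypothetical marker variables, one per startup file.
echo 'export FROM_PROFILE=yes' > "$demo_home/.bash_profile"
echo 'export FROM_BASHRC=yes'  > "$demo_home/.bashrc"

report='echo "profile=${FROM_PROFILE:-no} bashrc=${FROM_BASHRC:-no}"'

# Non-interactive login shell: reads ~/.bash_profile but not ~/.bashrc.
login_result="$(HOME="$demo_home" bash --login -c "$report")"

# Plain non-interactive shell: normally reads neither file.
plain_result="$(HOME="$demo_home" bash -c "$report")"

echo "login shell: $login_result"
echo "plain shell: $plain_result"

rm -rf "$demo_home"
```

If you add the line from above to the temporary .bash_profile, the login shell should report both markers.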

Zsh

Zsh will look for a few more files than Bash does:

/etc/zshenv

Run for every zsh shell.

~/.zshenv

Run for every zsh shell.

/etc/zprofile

Run for login shells.

~/.zprofile

Run for login shells.

/etc/zshrc

Run for interactive shells.

~/.zshrc

Run for interactive shells.

/etc/zlogin

Run for login shells.

~/.zlogin

Run for login shells.

Fish

Fish will read the following files on startup:

/etc/fish/config.fish

Run for every fish shell.

/etc/fish/conf.d/*.fish

Run for every fish shell.

~/.config/fish/config.fish

Run for every fish shell.

~/.config/fish/conf.d/*.fish

Run for every fish shell.

As you can see, Fish does not distinguish between login shells and interactive shells when it reads its startup files. If you need to run something only in login or interactive shells, you can use if status --is-login or if status --is-interactive inside your scripts.

Manipulate the environment

So that’s a bit complicated, but hopefully things are clearer now. The next step is to start manipulating the environment. First of all, you can obviously edit those files and wait until the next session is created, or load the newly edited file into your current session using either source /path/to/file or the shorthand . /path/to/file. That is the way to make permanent changes to your environment. But sometimes you only want to change it temporarily.
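Before moving on to temporary changes, here is a minimal sketch of why the file has to be sourced rather than executed. The settings file and the DEMO_EDITOR variable are made up for the demo.

```shell
# Hypothetical settings file, created in a temporary location for the demo.
settings_file="$(mktemp)"
echo 'export DEMO_EDITOR=vim' > "$settings_file"

# Running the file starts a child process; the variable is set there
# and is lost again when that process exits.
sh "$settings_file"
echo "after running:  ${DEMO_EDITOR:-<unset>}"

# Sourcing executes the file in the current shell, so the variable sticks.
. "$settings_file"
echo "after sourcing: ${DEMO_EDITOR:-<unset>}"

rm -f "$settings_file"
```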

To apply variables to a single command, you put the assignments at the beginning of the command like so:

# for bash or zsh

$ FOO=one BAR=two my_cool_command ...

# for fish

$ env FOO=one BAR=two my_cool_command ...

This will make the variable available for the command, and then go away as soon as the command finishes.
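You can see this for yourself with a small experiment for Bash or Zsh. This is only a sketch; GREETING is a made-up variable name, and sh -c stands in for my_cool_command.

```shell
# Make sure the (hypothetical) variable is not already set in this session.
unset GREETING

# The assignment prefix applies to this one command only.
during="$(GREETING=hello sh -c 'echo "${GREETING:-<unset>}"')"

# Back in the calling shell, the variable was never set.
after="${GREETING:-<unset>}"

echo "during the command: $during"
echo "after the command:  $after"
```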

If you want to keep the variable and have it available to all future commands in your session, you run the assignment as a standalone command like so:

# for bash or zsh

$ FOO=one

# for fish

$ set FOO one

# then use it later in your session

$ echo $FOO

one

As you can see the variable is available for the echo command run later in the session. The variable will not be available to other sessions, and will disappear when the current session ends.
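There is one catch with a plain assignment in Bash or Zsh: the variable lives in the shell itself and is not handed to the programs you start. A minimal sketch, using sh -c as a stand-in for any child process:

```shell
# Start from a clean slate in case FOO is already exported.
unset FOO

# A plain assignment creates a shell variable that is not part of the environment.
FOO=one

# The current shell can expand it...
in_session="$FOO"

# ...but a child process does not inherit it.
in_child="$(sh -c 'echo "${FOO:-<unset>}"')"

echo "current session: $in_session"
echo "child process:   $in_child"
```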

Finally, you can export the variable to make it available to subprocesses that are spawned from the session:

# for bash or zsh

[parent] $ export FOO=one

# for fish

[parent] $ set --export FOO one

# then spawn a subsession and access the variable

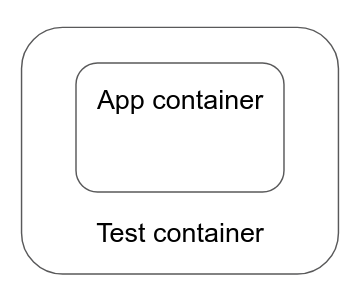

[parent] $ bash

[child] $ echo $FOO

one
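In Bash and Zsh you can also export a variable that already exists, and env gives you a quick way to check whether a variable is part of the environment. A small sketch, counting the matching lines in the env output:

```shell
# Start from a clean slate, then create an ordinary shell variable.
unset FOO
FOO=one

# env lists only exported variables, so FOO is missing at first.
before="$(env | grep -c '^FOO=')"

# export without a value marks the existing variable for export.
export FOO
after="$(env | grep -c '^FOO=')"

echo "matches before export: $before"
echo "matches after export:  $after"
```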

What about Ansible

If you are running Ansible to orchestrate your servers, you might ask yourself what kind of session that is and which files you should change to manipulate the environment Ansible uses on the target servers. While you could go down that road, a much simpler approach is to use the environment keyword in Ansible:

- name: Manipulating environment in Ansible

  hosts: my_hosts

  # play level environment

  environment:

    FOO: one

    BAR: two

  tasks:

    # task level environment

    - name: My task

      environment:

        FOO: uno

      some_module: ...

This can be combined with variables, for example environment: "{{ my_environment }}", which lets you use group vars or host vars to adapt the environment to different servers and scenarios.

Conclusion

The environment in Linux is a complex beast, but armed with the knowledge above you should be able to tame it and harness its powers for your own benefit. The environment is populated by different files depending on the kind of session and shell used. You can set a variable temporarily for a single command, or for the remaining duration of the session. To make subprocesses inherit a variable, use the export keyword/flag.

Lastly, if you are using Ansible you should really look into the environment keyword before you start to experiment with the different profile and rc-files on the target system.